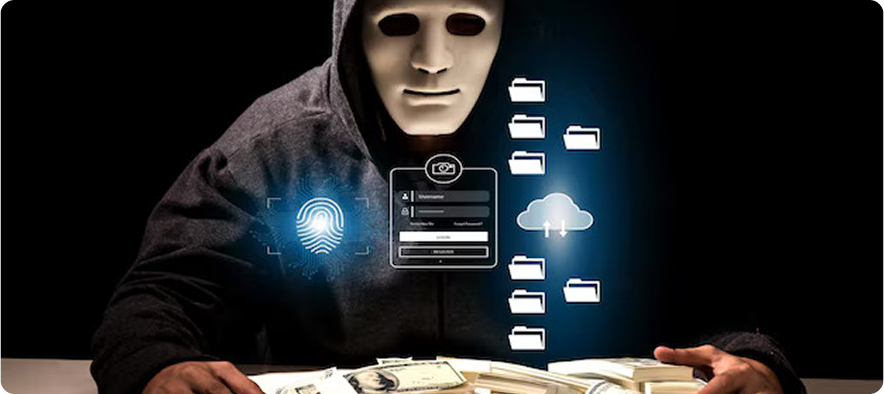

In an era where artificial intelligence is rapidly reshaping industries, the concept of “evidence” is undergoing a profound transformation, and document verification is increasingly a question of authenticity. Nearly half of investigators report encountering digital evidence in 80–100% of cases, and with the rise of deepfakes, that evidence can be easily manipulated. From identity verification and financial transactions to legal documentation and digital communications, the way we collect, validate, and trust evidence is no longer what it used to be. Traditional verification methods, once considered robust, are now being challenged by the sophistication of AI-powered manipulation and fraud, making deepfake detection in documents a necessity.

As AI continues to evolve, organizations must rethink how they approach evidence verification, not just as a compliance requirement but as a strategic necessity for trust, security, and resilience.

The Changing Nature of Document Verification

Historically, evidence was tangible, static, and relatively easy to authenticate. Physical documents, handwritten signatures, and in-person verification processes created a strong chain of trust. Even in the early days of digitization, scanned documents and basic identity checks were sufficient.

Today, however, evidence is predominantly digital—and increasingly dynamic. Digital identities, online transactions, remote onboarding, and virtual interactions have become the norm. While this shift has brought efficiency and scalability, it has also introduced new vulnerabilities.

AI has enabled the creation of highly convincing fake identities, synthetic media, and manipulated documents. For example, deepfakes can replicate human faces and voices with startling accuracy, making it difficult to distinguish between genuine and fabricated evidence. Deepfake scams caused approximately $1.1 billion in global losses in 2025. Similarly, AI-generated documents can mimic official formats, logos, and signatures, bypassing traditional verification checks.

Document verification has therefore become a critical step in establishing trustworthy evidence.

The Rise of AI-Powered Fraud

Fraudsters are leveraging AI at an unprecedented scale. Automated tools can now generate fake profiles, create synthetic identities by blending real and fabricated data, and even simulate behavioral patterns to appear legitimate.

This has significant implications for industries such as banking, fintech, e-commerce, and insurance, where document verification is a critical component of onboarding and transaction monitoring. One-time verification processes, such as traditional KYC (Know Your Customer), are no longer sufficient to mitigate these risks.

AI-driven fraud is not only more sophisticated but also more scalable. What once required manual effort can now be executed in bulk, allowing bad actors to exploit systems faster than ever before.

Limitations of Traditional Verification Methods

Most conventional document verification systems rely on static checks—document validation, database lookups, or rule-based risk scoring. While these methods are effective against basic fraud, they fall short in detecting advanced AI-driven threats.

Some key limitations include:

- Static Validation: One-time checks fail to account for changes over time or evolving risk profiles.

- Surface-Level Analysis: Traditional systems often focus on visible attributes (e.g., document authenticity) without analyzing deeper behavioral or contextual signals.

- Delayed Detection: Fraud is often identified after the damage has been done, rather than being prevented in real time.

- Siloed Data: Lack of integration across systems limits the ability to build a comprehensive risk profile.

These gaps highlight the need for a more dynamic and intelligent approach to evidence verification.

From Verification to Continuous Trust

To address these challenges, organizations must shift from static document verification to continuous trust models that include deepfake detection in documents. This involves monitoring and validating evidence throughout the entire lifecycle of a user or transaction, rather than relying on a single checkpoint.

Continuous verification leverages real-time data, behavioral analytics, and AI-driven insights to assess risk dynamically. For example:

- Monitoring user behavior to detect anomalies

- Analyzing device and network signals for inconsistencies

- Continuously updating risk scores based on new information

- Cross-referencing multiple data sources to validate authenticity

This approach not only enhances security but also improves user experience by reducing friction for legitimate users.
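As a rough illustration of a risk score that is updated as new information arrives, the sketch below blends each incoming signal into a running score with an exponential moving average. The `RiskProfile` structure, the `update_risk` function, and the 0.3 weight are hypothetical choices made for this example, not part of any specific product.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RiskProfile:
    """Running risk estimate for one user or transaction (hypothetical structure)."""
    score: float = 0.0  # 0.0 = no known risk, 1.0 = maximum risk
    history: List[float] = field(default_factory=list)


def update_risk(profile: RiskProfile, signal: float, weight: float = 0.3) -> float:
    """Blend a new risk signal (0.0 to 1.0) into the running score.

    Uses an exponential moving average, so recent signals count
    more than older ones. The weight is an assumed tuning value.
    """
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be between 0.0 and 1.0")
    profile.score = (1 - weight) * profile.score + weight * signal
    profile.history.append(signal)
    return profile.score


# Example: a normal login followed by a login from an unfamiliar device.
profile = RiskProfile()
update_risk(profile, 0.1)  # low-risk signal
update_risk(profile, 0.9)  # anomalous signal raises the running score
```

Because the score is a running value rather than a one-time result, a single anomaly raises it gradually and repeated anomalies push it higher, which is the behavior a continuous model is meant to capture.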

The Role of AI in Strengthening the Document Verification Process

While AI introduces new risks, it also offers powerful tools to combat them. When used responsibly, AI can significantly enhance evidence verification, including by detecting deepfakes within the document verification process.

1. Advanced Pattern Recognition

AI can analyze vast amounts of data to identify patterns that are invisible to human analysts. This includes detecting subtle inconsistencies in documents, identifying unusual user behavior, and recognizing fraud signatures.

2. Real-Time Risk Scoring

Machine learning models can assess risk in real time, enabling organizations to make faster and more accurate decisions. This is particularly valuable in high-volume environments such as financial transactions or online onboarding.

3. Digital Footprinting

AI can aggregate and analyze digital footprints—such as online presence, social signals, and historical activity—to build a comprehensive identity profile. This helps validate whether a user’s digital identity aligns with their claimed identity.

4. Synthetic Identity Detection

By analyzing data relationships and anomalies, AI can identify synthetic identities that may appear legitimate on the surface but exhibit inconsistencies at a deeper level.
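One simple form of relationship analysis is to look for contact or device details that are reused across supposedly different people. The sketch below is a minimal, rule-based stand-in for that idea, not a machine learning model; the field names (`identity_id`, `phone`, `address`, `device_id`) and the function name are assumptions made for illustration.

```python
from collections import defaultdict


def find_shared_attributes(applications,
                           fields=("phone", "address", "device_id"),
                           min_identities=2):
    """Return attribute values reused by several distinct identities.

    Each application is a dict with an "identity_id" key plus optional
    attribute fields. The result maps (field, value) to the sorted list
    of identity IDs that share that value.
    """
    owners = defaultdict(set)
    for app in applications:
        for name in fields:
            value = app.get(name)
            if value:  # skip missing or empty attributes
                owners[(name, value)].add(app["identity_id"])
    return {key: sorted(ids)
            for key, ids in owners.items()
            if len(ids) >= min_identities}


# Example: two different identities reuse the same phone number.
applications = [
    {"identity_id": "A", "phone": "555-0100", "address": "1 Main St"},
    {"identity_id": "B", "phone": "555-0100", "address": "9 Oak Ave"},
    {"identity_id": "C", "phone": "555-0199", "address": "4 Elm Rd"},
]
shared = find_shared_attributes(applications)
# shared == {("phone", "555-0100"): ["A", "B"]}
```

A shared attribute is not proof of fraud, since family members often share an address or phone. In practice such matches would feed into a broader risk assessment rather than trigger a rejection on their own.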

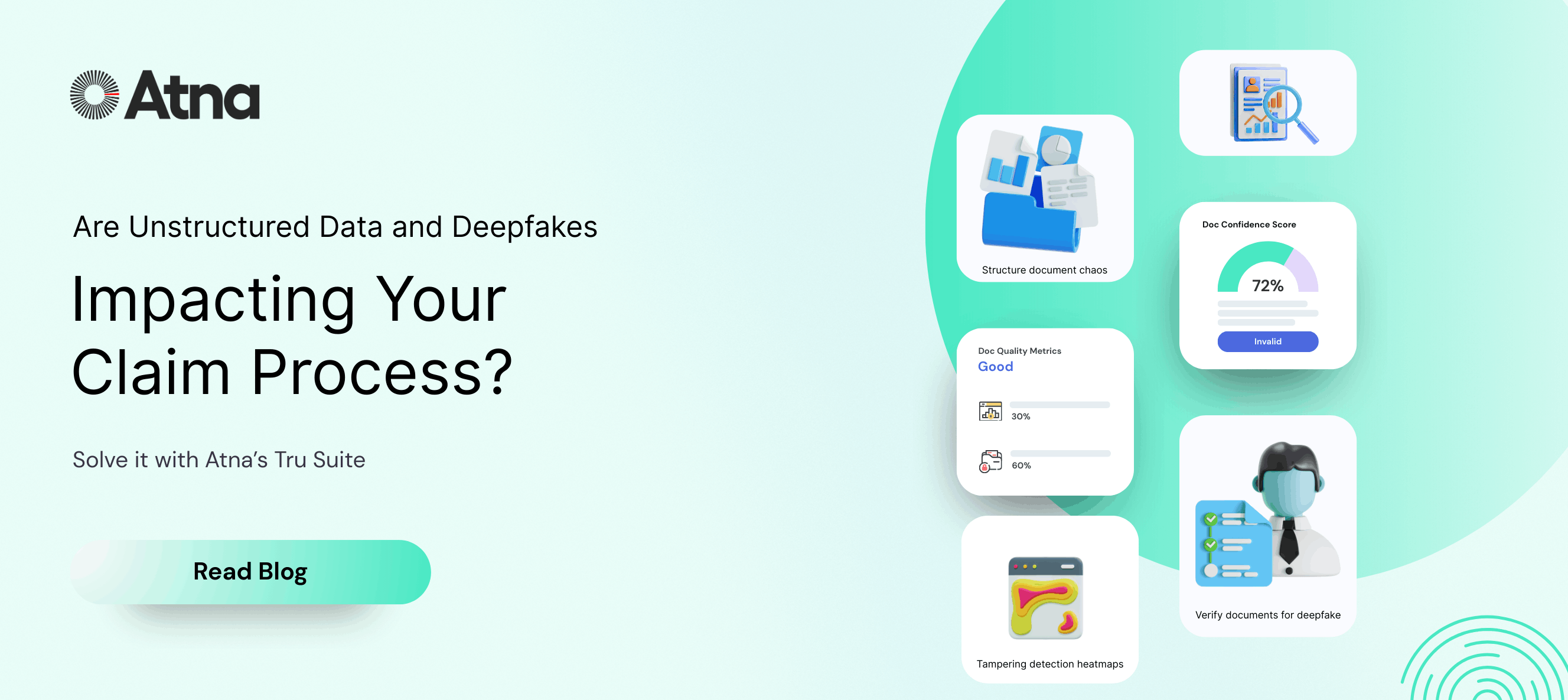

Building a Future-Ready Document Verification Framework with Atna AI

To effectively navigate the age of AI, organizations need to adopt a holistic and future-ready approach to evidence verification. This involves integrating technology, processes, and governance into a cohesive framework. With these needs in mind, Atna AI offers a systematic framework for document verification, especially for legal evidence checks, through its product TRU. Docs.

1. Multi-Layered Verification

Relying on a single method is no longer sufficient. Organizations should implement multiple layers of verification, combining document checks, biometric authentication, behavioral analysis, and digital footprinting.
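To make the idea of layering concrete, the sketch below combines per-layer confidence scores into a single value using a weighted average. It is a generic illustration only and does not represent the TRU. Docs API; the layer names, the weights, and the `combined_confidence` function are all assumptions.

```python
# Hypothetical weights: how much each verification layer contributes.
LAYER_WEIGHTS = {
    "document": 0.4,
    "biometric": 0.3,
    "behavior": 0.2,
    "footprint": 0.1,
}


def combined_confidence(layer_scores, weights=LAYER_WEIGHTS):
    """Weighted average of the layers that reported a score (0.0 to 1.0).

    Layers without a score are left out and the remaining weights are
    renormalized, so a missing layer does not count as a failure.
    """
    present = {name: score for name, score in layer_scores.items()
               if name in weights}
    if not present:
        raise ValueError("no recognized verification layers supplied")
    total_weight = sum(weights[name] for name in present)
    weighted_sum = sum(weights[name] * score
                       for name, score in present.items())
    return weighted_sum / total_weight


# Example: a strong document check paired with a weaker biometric match.
confidence = combined_confidence({"document": 1.0, "biometric": 0.5})
```

Renormalizing over the available layers is one design choice among several. A stricter system might instead treat a missing layer as a reason to request additional checks.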

2. Real-Time Intelligence

Verification systems should be capable of processing and analyzing data in real time, enabling proactive risk mitigation rather than reactive responses.

3. Interoperability and Integration

Seamless integration across systems and data sources is essential for building a unified view of risk. APIs and modular platforms can help organizations embed document verification capabilities into existing workflows.

4. Explainability and Transparency

As AI becomes a core component of document verification, it is crucial to ensure that decision-making processes are transparent and explainable. This not only builds trust but also supports regulatory compliance.

5. Continuous Learning

AI models must be continuously updated and trained to adapt to evolving threats. This requires ongoing data collection, feedback loops, and model optimization.

Balancing Security and User Experience

One of the biggest challenges in evidence verification is striking the right balance between security and user experience. Overly stringent verification processes can create friction, leading to customer drop-offs and dissatisfaction. On the other hand, weak verification can expose organizations to significant risks.

AI can help achieve this balance by enabling adaptive verification, where the level of scrutiny is adjusted based on the risk profile of the user or transaction. Low-risk users can enjoy a seamless experience, while high-risk cases are subjected to more rigorous checks.
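Adaptive verification can be pictured as a mapping from a risk score to a set of required checks. The sketch below is a minimal version under assumed thresholds (0.3 and 0.7) and hypothetical check names; real systems would tune both to their own risk appetite.

```python
def required_checks(risk_score, low=0.3, high=0.7):
    """Pick verification steps based on a risk score between 0.0 and 1.0.

    Scores below `low` get the lightest path, scores from `low` up to
    (but not including) `high` add a biometric step, and scores at or
    above `high` also go to manual review.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0.0 and 1.0")
    if risk_score < low:
        return ["document_check"]
    if risk_score < high:
        return ["document_check", "biometric_check"]
    return ["document_check", "biometric_check", "manual_review"]


# Example: a low-risk user versus a high-risk transaction.
print(required_checks(0.1))  # ['document_check']
print(required_checks(0.9))  # ['document_check', 'biometric_check', 'manual_review']
```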

Ethical and Regulatory Considerations

As document verification systems become more sophisticated, ethical and regulatory considerations become increasingly important. Issues such as data privacy, bias in AI models, and consent must be carefully addressed.

Organizations must ensure that their verification practices comply with relevant regulations and uphold ethical standards. This includes:

- Protecting user data and ensuring privacy

- Avoiding discriminatory or biased outcomes

- Providing users with transparency and control over their data

The Road Ahead

The age of AI is redefining what it means to trust evidence. As the line between real and synthetic continues to blur, organizations must move beyond traditional verification methods and embrace a more dynamic, intelligent, and continuous approach.

Evidence verification is no longer just about validating documents; it’s about establishing trust in a digital-first world. Atna AI leverages AI responsibly, adopts continuous verification models, and builds robust frameworks so that organizations can stay ahead of emerging threats while delivering secure and seamless experiences.

In this rapidly evolving landscape, the ability to verify evidence effectively will not just be a competitive advantage; it will be a fundamental requirement for survival.